Health & Medicine

4 ways tech can help your mental health

Big data and personal sensing technology are revolutionising psychology, opening new frontiers in our understanding of how our minds work and how we treat mental illness

Published 19 February 2018

When you think about it, your smartphone knows you in a way that no one else can, even your nearest and dearest. Even your psychologist.

Your phone can track where you are, how you are moving, what you are seeing, what you are hearing and, if linked to an activity tracker, how you are sleeping. It can even monitor your emails, texts and phone calls to assess how social you are.

It may sound like Big Brother, but when governed by privacy protocols this wearable sensing, combined with big data computing, is the closest we’ve yet come to cataloguing our lived experience. It promises to uncover new information on how we think, learn, use language and recall memories, and to help us better understand and treat mental illnesses. And it is already happening.

University of Melbourne researchers are using people’s phones to track the lives of patients with bipolar disorder to understand, monitor and even predict the sudden swings between their manic and depressive episodes. In another experiment investigating how our memory works, participants are wearing their phones around their necks constantly for two weeks at a time.

“There has never been a better time to be a psychological scientist,” says Professor Simon Dennis, a computer and psychological researcher at the University, who is running both experiments. “We can now start to capture people’s objective experiences in a real way.”

At a time when advances in neuroscience and imaging are revolutionising our knowledge of how our brain works from the inside, Professor Dennis says the emergence of real time sensing and big data is a similarly revolutionary tool. But instead of giving us an inside view of our minds, he says it offers an unprecedented look at what is going into our minds.

“The advances in neuroscience have led to a lot of new thinking and knowledge of how our brains function. But the focus is on looking inside our heads when in fact our minds are always reflecting the environment around us,” says Professor Dennis.

“If you really want to understand the mind you have to look externally at the objective experience as well as internally at the brain itself. They are flipsides of the same coin. The opportunity of big data and the new sensing technology is that we can now start to capture that experience in a real way.”

Professor Dennis is the director of the University’s new Complex Human Data Hub, which is drawing together psychological science, computer science and mathematics to collect and harness big data on how we live and think.

Previously, psychologists have been limited to understanding people through laboratory tests and clinical visits, neither of which accurately captures a person’s lived reality. For a start, people in a lab or clinic won’t always be reliable in their feedback. Environments like this are artificial and people can forget, lie, tell researchers what they think they want to hear, or be misinterpreted. Indeed, conditions like bipolar disorder and schizophrenia are associated with some forgetfulness, which complicates feedback from clinic visits.

Sampling people’s experiences as they happen isn’t new, but wearable sensing takes it to a whole new level. Psychologists have in the past made effective use of surveys that require people to note how they are feeling at different times. This “active” experience sampling is essential in collecting subjective data like someone’s mood. But it is also artificial in that it requires the subject to respond to a prompt. The advantage of sensors is that they allow “passive” experience sampling in which the data isn’t compromised by the subject having to stop and act. They can just be themselves.

For example, if an activity tracker linked to a phone suggests that someone with bipolar disorder isn’t sleeping, it may signal a manic episode. Global positioning data on a phone indicating that someone is staying holed up at home could signal a depressive episode. Similarly, a depressive episode may be on the way if intermittent microphone recordings on the phone suggest they aren’t speaking to anyone.

The system uses a phone app developed by Professor Dennis and services from device-connecting company IFTTT. For legal and privacy reasons, audio is limited to intermittent grabs and is scrambled so that researchers can tell only whether someone is speaking, not what they’re saying. Similarly, researchers will only be able to see that emails, text messages and phone calls have been logged – they don’t have access to the content. In the memory experiment the system is extended to images using the phone’s camera and, like the audio, the stills are taken intermittently.

The Hub’s deputy director, Associate Professor Amy Perfors, says sensors and the monitoring of online social behaviour through platforms like Facebook and Twitter, in combination with experience sampling, can create a rich stream of data that can be aggregated and mathematically modelled to draw out patterns. This data, she says, is a gold mine for researchers who are interested in how people socially interact and acquire and use language.

“People are social and we create each other’s environments and culture. Tools like these can capture the input that is shaping our behaviour and will eventually enable psychologists to better understand how it all happens.

“By knowing what an individual’s actual experience is we will be able to model how people interact, such as what makes them decide to talk to someone, what they decide to share with someone, what they learn from each other, what social structures lead to knowledge exchange…we can delve into some deep questions.”

For example, how do we acquire language? Why are some people better at it than others? Why is second-language acquisition usually more difficult? Associate Professor Perfors says the exact processes aren’t completely understood, but we do know that our environment and the language we hear are crucial in some way. “By being able to capture that environment and monitor language acquisition we could be able to nail that question.”

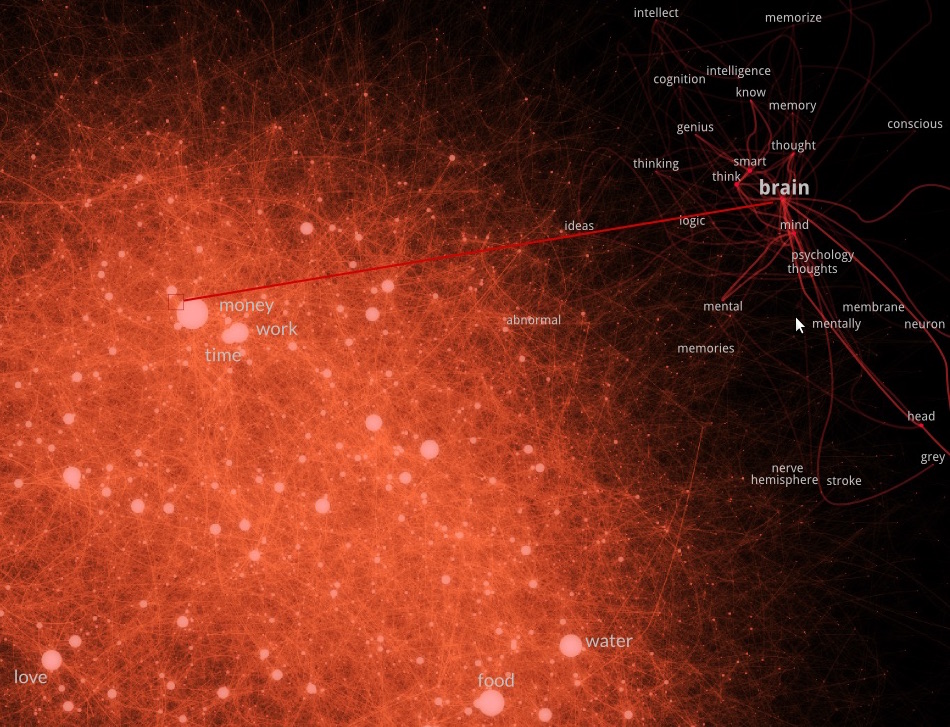

One of Associate Professor Perfors’ postdoctoral students, Dr Simon De Deyne, has created an online word association game, Small World of Words, that covers several languages and has had over 4 million responses. It represents a huge databank of how we connect thoughts and how those connections differ across languages.

“It is like a huge map of semantic space,” says Associate Professor Perfors. “It is giving us a window into how we arrange all our concepts and it gives us a new tool for asking questions about how people make generalisations.”

Of course, this new world of psychology relies on people being prepared to engage with it. But Associate Professor Perfors is confident that finding participants for the new experiments won’t be hard.

“Until now we haven’t been able to get a handle on individual differences because we haven’t had rich enough data. That is now changing, and that is massive. People want to know about themselves and that is a big motivation for them to engage.”

Banner Image: Paul Burston