Sciences & Technology

Quantum leap in computer simulation

The quantum revolution is coming, and it’s taken some big leaps of thinking from some of the biggest minds of the 20th century to get us to this point

Published 3 October 2018

The history of the quantum revolution is replete with famous names like Einstein, Bohr, Pauli, Heisenberg, Schrödinger, and others who have forever changed the way we think about the world.

The smug self-satisfaction of many physicists at the beginning of the twentieth century that “there is nothing to be discovered in physics now; all that remains is more and more precise measurement” (attributed to Lord Kelvin, 1900) quickly gave way to a staggering set of advances. These swept away comfortable ideas and replaced them with theories that, while proving extremely useful, strained common-sense ideas of what reality should look like to breaking point.

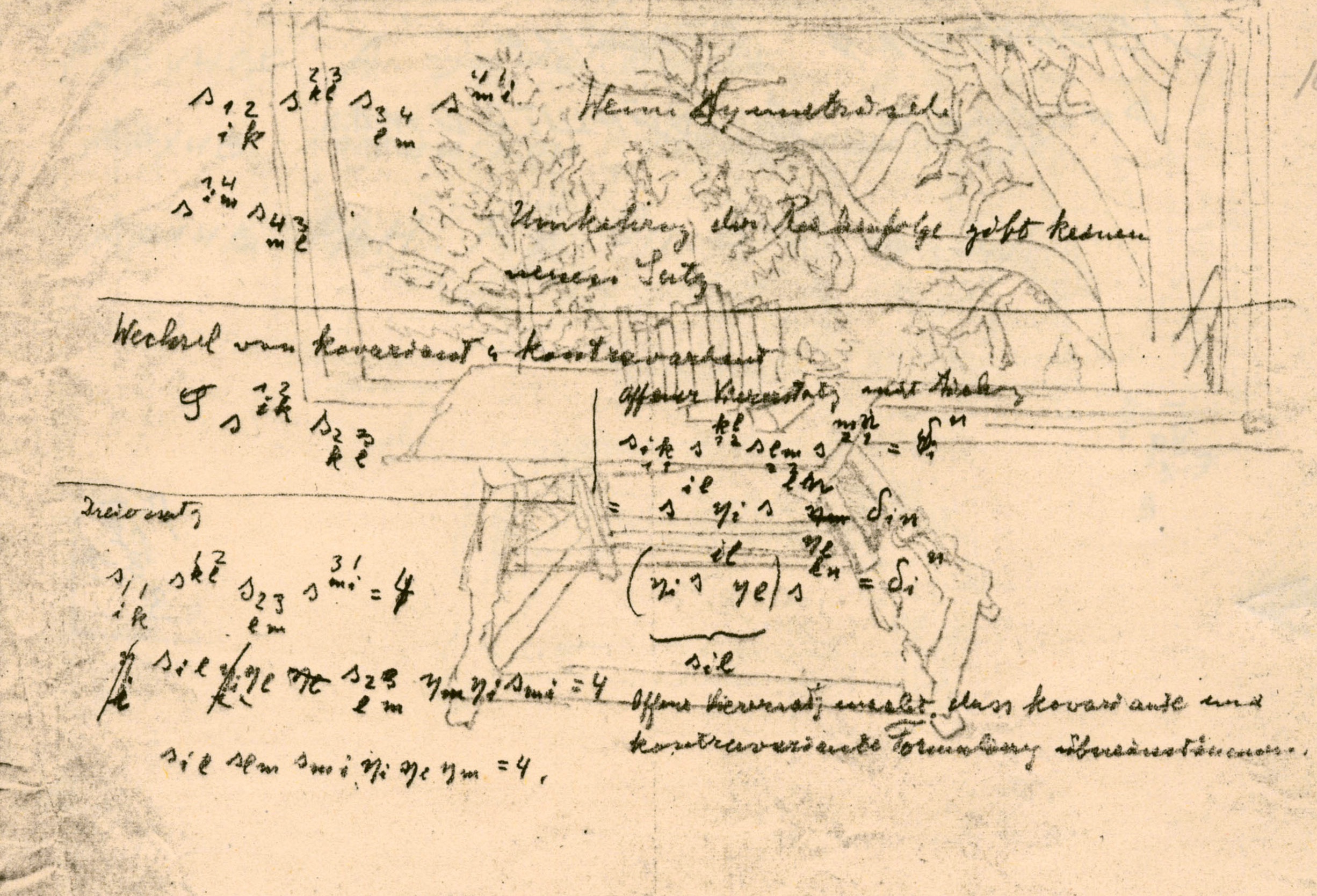

The revolution of ideas started with German theoretical physicist Max Planck and, later, Albert Einstein, who showed that light comes in packets and that the size of each packet depends on the frequency of the vibration.

Next was Frenchman Louis de Broglie, who showed that not only does light act like a particle, but particles can also act like light – they too sometimes behave like waves. And then there was another German, Werner Heisenberg, who found that you couldn’t even measure with certainty both the position and velocity of a particle at the same time.
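For readers who like to see the shorthand, these three ideas are usually written as the relations below. This is a summary in standard textbook notation, not the form in which the results were first published: E is the energy of one packet of light, ν its frequency, h Planck’s constant, λ the wavelength associated with a particle of momentum p, and Δx and Δp the uncertainties in position and momentum (momentum being mass times velocity).

```latex
\begin{align}
E &= h\nu \\
\lambda &= \frac{h}{p} \\
\Delta x \, \Delta p &\ge \frac{\hbar}{2}, \qquad \hbar = \frac{h}{2\pi}
\end{align}
```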

So many certainties fell in the first part of the 20th century.

The confidence that what we measure is real; that particles and waves are fundamentally different; that time is the same for all observers; that nature is at its core predictable; and that with sufficient knowledge we can forecast how systems will evolve with certainty, was replaced by a view of reality in which the best we can do is predict probabilities.

But by the middle of the 20th century, many physicists had made their peace with the strange new way of looking at the world because quantum mechanics was just so damned useful.

Students who would complain, “But sir, it just doesn’t make sense that a particle can be in two places at the same time,” were told to “shut up and calculate”, because the power of quantum mechanics to accurately predict behaviour was staggering.

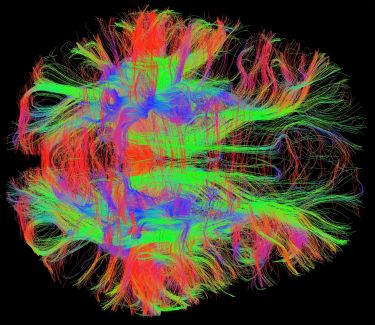

New technologies, all dependent on quantum physics, such as semiconductors and computers, lasers and communications, the internet and GPS, as well as MRI and PET imaging, emerged to the delight of a public largely unaware of, and unconcerned with, the fact that even many physicists remain uncomfortable with the foundational interpretation of quantum mechanics.

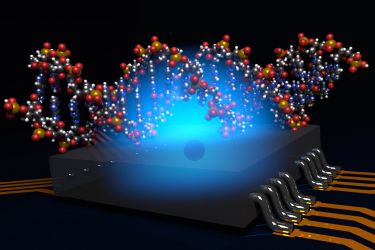

So, what are those seminal moments in the history of quantum physics that have had a direct impact on the emergence of quantum computing and quantum technology?

Well, the first emerged as a result of a desire to really understand the nature of reality.

Suppose we consider two particles, A and B, which have emerged from the breakup of a spinning molecule so that they must both be spinning in the same direction, either both up or both down, but we don’t know which. Today we would call these particles entangled.

Now suppose I measure one particle and find that it’s spinning up. If I measure the second particle I know I will also find that it’s spinning up and vice versa. We know from Heisenberg that just by measuring the particle we change it – but does that mean that the act of measuring A also changes the state of B?

Einstein famously called this ‘spooky action at a distance’ and didn’t believe it possible.

The other option is simply that A and B carry with them a secret hidden code that, while we may not know what it is, tells the particles whether they are spinning up or spinning down.

In the 1930s we had no way of knowing the answer, and this debate seemed as pointless as asking: if a tree falls in the forest and nobody hears it, has it really fallen? This seemed much more the realm of philosophy than physics.

However, in 1964 Northern Irish physicist John Bell proposed an experiment to differentiate between these distinct possibilities. Drawing on Bell’s ideas, Frenchman Alain Aspect in 1981 performed experiments on pairs of entangled photons with unequivocal results – if two particles are entangled, it isn’t possible to measure one of them without disturbing the other.

If so, that means that B ‘knows’ somehow that A has been measured, even if A and B are separated by large distances – so large, in fact, that no signals could pass from A to B within the measurement time. This is accepting, of course, that the maximum speed of signal transmission is the speed of light.

And as hard as that is to accept using our common sense, it is the way the world is.
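The gap between the ‘hidden code’ picture and the quantum prediction can be put into numbers. The sketch below is an illustration rather than a description of Aspect’s apparatus: it uses the CHSH form of Bell’s test (a later reformulation by Clauser, Horne, Shimony and Holt), takes the ‘both up or both down’ pair described above as its state, and assumes one particular set of detector angles. The function names are hypothetical. Any hidden-code explanation must give a combined correlation S no larger than 2 in magnitude.

```python
import numpy as np

# Pauli matrices: spin measured along the z and x axes.
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
X = np.array([[0.0, 1.0], [1.0, 0.0]])

# Entangled pair: equal mix of "both up" (|00>) and "both down" (|11>).
PHI_PLUS = np.array([1.0, 0.0, 0.0, 1.0]) / np.sqrt(2)


def spin_observable(angle):
    """Spin measured along a direction tilted by `angle` in the x-z plane."""
    return np.cos(angle) * Z + np.sin(angle) * X


def correlation(angle_a, angle_b, state=PHI_PLUS):
    """Average product of the two +1/-1 results for detector angles a and b."""
    joint = np.kron(spin_observable(angle_a), spin_observable(angle_b))
    return float(state @ joint @ state)


def chsh_value(a, a_alt, b, b_alt):
    """CHSH combination of four correlations; hidden codes give |S| <= 2."""
    return (correlation(a, b) - correlation(a, b_alt)
            + correlation(a_alt, b) + correlation(a_alt, b_alt))


# Assumed detector settings, chosen because they maximise S for this state.
S = chsh_value(0.0, np.pi / 2, np.pi / 4, 3 * np.pi / 4)
print(S)
```

With these settings the quantum prediction comes out at 2√2, roughly 2.83, which is above the limit of 2 that any hidden code could reach. Measuring a value above 2 in the laboratory is what rules the hidden-code option out.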

This might be considered by some to be a bug, but for quantum computers it is in fact a very important feature. In a classical computer, the read-out or measurement step is just a means of peering into the computer and getting the result.

The read-out doesn’t disturb the computation or add to it. But in a quantum computer, if I read out the state of one of the qubits, not only does the state of that qubit change, so too do all the qubits that are entangled with the qubit that I have read out.

So, the measurement becomes an integral part of the computation.

I might not even look at the result of the qubit that I have read out, because my intent isn’t to know what the result is, but simply to change the state of the other qubits. This is a brand new way of thinking and quantum algorithms use it to full advantage.

It puts the old philosophical chestnut of the ‘falling tree in the lonely forest’ to technological use, just as the debate about the nature of light (is it a wave or a particle?) in the early days of quantum mechanics found practical application in lasers and computer chips.
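The idea that reading out one qubit changes its entangled partner can be seen in a toy calculation. The sketch below is a minimal two-qubit simulation written with NumPy, not code for any real quantum computer; the function names and the ordering of the amplitudes (|00>, |01>, |10>, |11>) are assumptions made for the illustration.

```python
import numpy as np


def second_qubit_probs(state):
    """Probabilities of finding the second qubit in 0 or 1."""
    amps = state.reshape(2, 2)  # rows: first qubit, columns: second qubit
    return np.sum(np.abs(amps) ** 2, axis=0)


def measure_first_qubit(state, rng):
    """Read out the first qubit; return the outcome and the collapsed state."""
    amps = state.reshape(2, 2)
    prob_zero = np.sum(np.abs(amps[0]) ** 2)
    outcome = 0 if rng.random() < prob_zero else 1
    collapsed = np.zeros_like(amps)
    collapsed[outcome] = amps[outcome]      # keep only the observed branch
    collapsed /= np.linalg.norm(collapsed)  # renormalise to total probability 1
    return outcome, collapsed.reshape(4)


# Entangled pair: equal mix of |00> and |11>.
pair = np.array([1.0, 0.0, 0.0, 1.0]) / np.sqrt(2)

rng = np.random.default_rng(seed=1)
before = second_qubit_probs(pair)
outcome, after_state = measure_first_qubit(pair, rng)
after = second_qubit_probs(after_state)

print("second qubit before read-out:", before)
print("first qubit read as:", outcome)
print("second qubit after read-out:", after)
```

Before the read-out the second qubit is a 50/50 proposition. Afterwards it is certain to match whatever the first qubit showed, even though nothing in the code touched it directly. That change in the untouched qubit is the effect quantum algorithms exploit.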

The second defining quantum event was a talk.

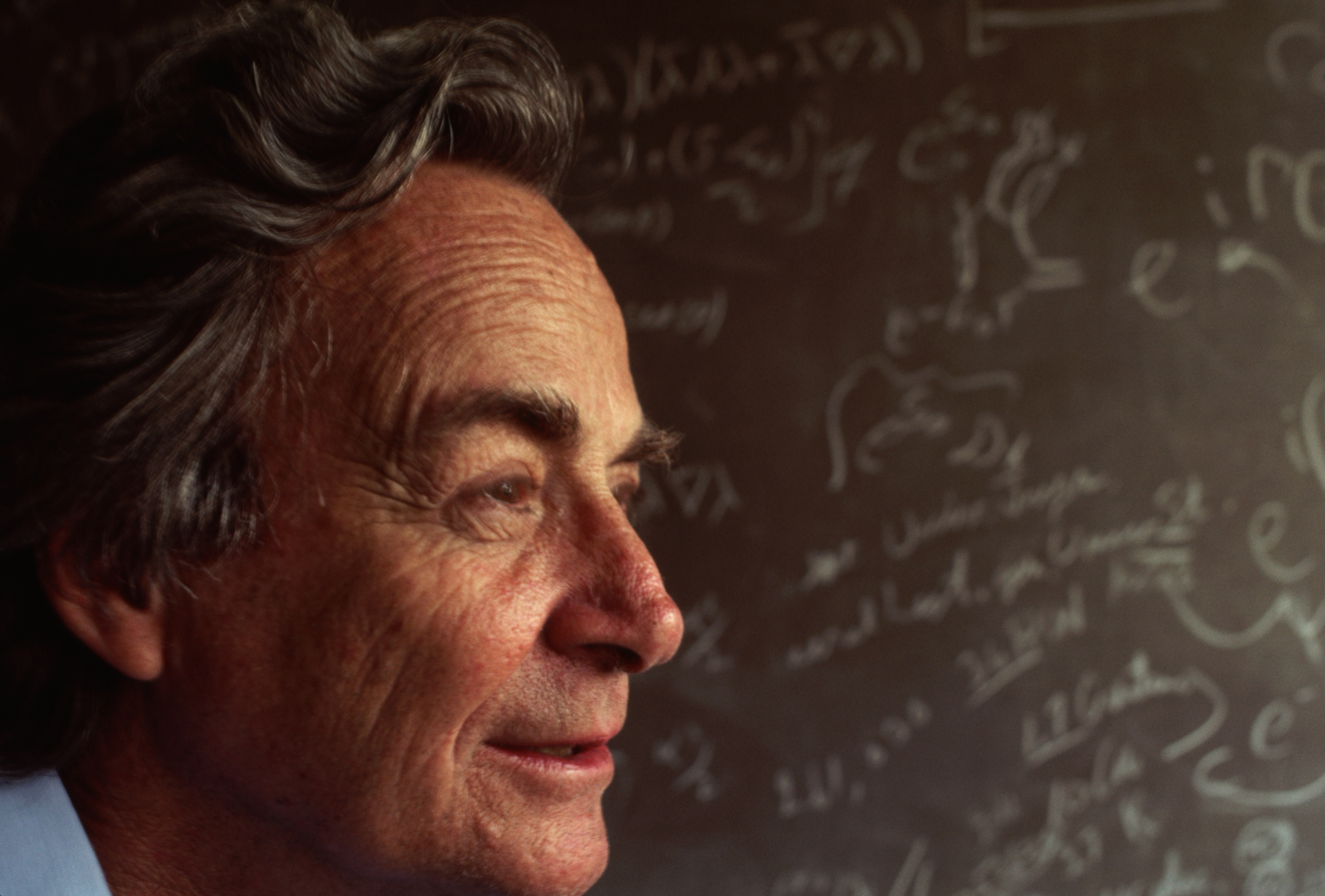

In 1959, theoretical physicist Richard Feynman asked a seemingly simple question: could we manipulate matter on the scale of individual atoms in order to create nanoscale machines, computers and new chemicals simply by arranging the atoms the way we want them?

Perhaps unsurprisingly, the talk didn’t raise a lot of interest in an era when no one had ever imaged a single atom, let alone manipulated one or arranged atoms in a pattern on a surface. All that changed in 1981 when German physicist Gerd Binnig and his Swiss colleague Heinrich Rohrer used a scanning tunnelling microscope to image single atoms.

For the very first time, we could see atoms, not just infer their existence.

In 1989, IBM scientists spelled out the company’s name using 35 xenon atoms on a nickel surface.

Then, in 2013, they released the world’s smallest movie, A Boy and His Atom, a stop-motion animation in which single carbon monoxide molecules are manipulated to create the short film.

These advances excited the imagination of a whole generation of scientists.

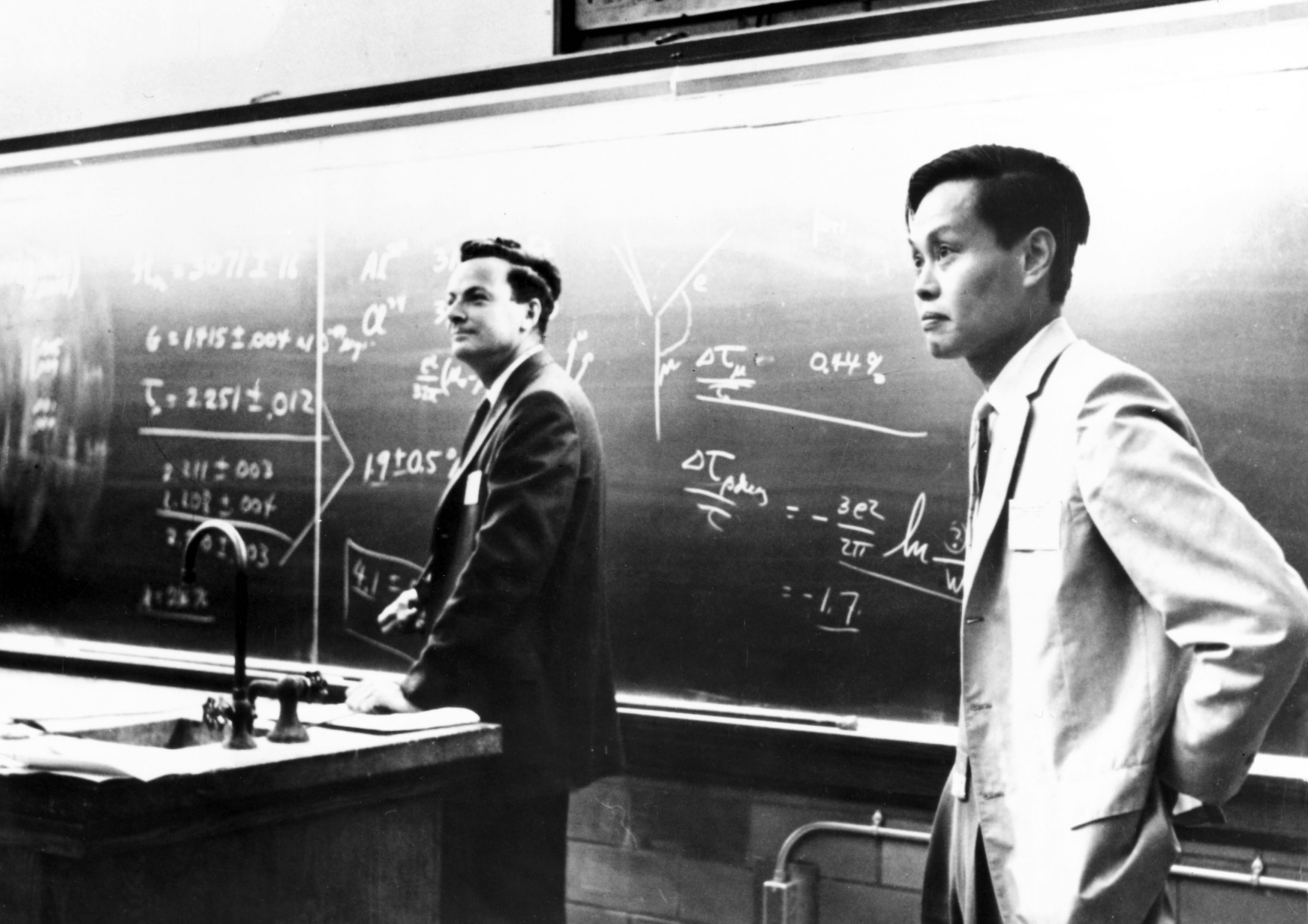

Professor Feynman’s vision was starting to be realised. Another of his visions was to create a quantum computer capable of simulating the quantum world – something he proposed almost 40 years ago in a 1981 lecture at MIT.

These are just two examples of moments in our intellectual quantum history.

The physicists involved were pioneering souls who ventured into realms that many considered either wildly impractical or philosophically intractable; but they pushed us into new ways of thinking that have ultimately resulted in technological quantum advances beyond the imagination of their creators.

And as we celebrate the past triumphs of quantum mechanics and look forward to the development of future technologies, we would do well to remember the words of Richard Feynman:

“Physics is like sex: sure, it may give some practical results, but that’s not why we do it”.

Find out about University of Melbourne’s IBM Quantum Hub.

Banner: Richard Feynman with Yang Chen Ning, American physicists, c 1950s/ Getty Images