Sciences & Technology

We need to retain research integrity in the AI era

Higher education students are cautious about using generative AI and academics lack guidance, finds new report

Published 22 June 2023

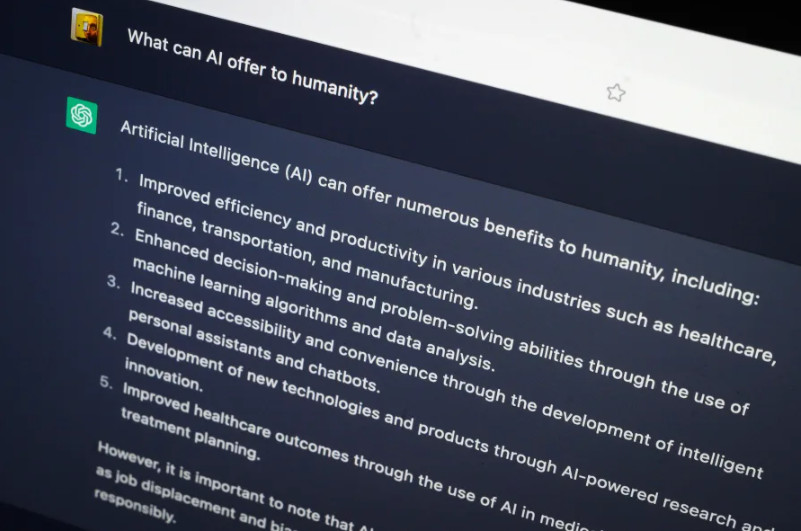

There has been intense speculation about the role of generative artificial intelligence (AI) – including ChatGPT and other large language models – in the education of university students.

Is it a powerful tool that will enhance learning or is it a threat to academic integrity?

A recently announced Federal Government inquiry – currently calling for submissions – will examine the impact the new technology could have on the Australian education system.

Since ChatGPT was released in November 2022, universities have been playing catch up in understanding the implications of this technology for teaching and learning, and, importantly, for assessment.

Academic integrity has been one of the key issues on the table: generative AI can write a believable essay and pass a medical licensing examination, so where does this leave our ability to identify what a student has learned?

What hasn’t been clear is whether students and academics are keen to jump on board with this technology, and how either group is currently using it.

Our study examined this question by asking current Australian university students and academics to self-report their use of generative AI. We received 110 responses (78 from students and 32 from academics), covering every state and territory in Australia.

Among the student responses, we found that while some students are ready to embrace generative AI in their university learning, many are reluctant to engage with the technology at all.

In fact, almost half of all student respondents (48 per cent) had not used generative AI.

This study, which reports on data collected between 24 April and 23 May 2023, indicates that, far from jumping at the chance to gain any educational advantage, some students have a very thoughtful and measured response to generative AI.

The responses included concerns about the ethics of contributing to the technology, the impact on students’ individual learning skills and the longer-term impact on human thinking and learning.

One student wrote:

It is a disservice to a student’s own learning to rely on it if they wouldn’t be able to write it without developing those skills first.

Others were wary about relying too much on generative AI, given the current limitations in this generation of the technology. For example, one wrote:

Sometimes I’ve found it beneficial to get a really broad overview of a topic before honing in on a specific area. I don’t find it helpful in the formation or generation of assignments and prefer that process to be entirely self-directed.

Fewer than one in ten student respondents had used generative AI to produce content that was submitted as all or part of an assessable piece of work.

Importantly, though, our finding doesn’t indicate that students who used AI tools were necessarily cheating – many universities have policies that allow the use of content generated by AI under certain circumstances (for example, when the use is properly referenced and acknowledged).

Academics and students who had used generative AI were finding educational applications for it in teaching and learning.

Students described using generative AI in a number of ways that have previously been speculated about, including supporting creativity, brainstorming, refining ideas, summarising and language support (for example, refining sentence structure), particularly for students with English as an additional language.

The use of conversational agents (like chatbots) to support learning has been demonstrated in previous work, and our study highlighted that students particularly value the interactive nature of generative AI for this reason.

They referred to generative AI as a ‘co-pilot’, a ‘study partner’ and as an automated ‘tutor’.

For example, one said:

It makes me feel less stressed and anxious about assessments, as I almost feel as though I have a study buddy or friends to help me through.

While students generally agreed that generative AI would improve efficiency in completing tasks, academics did not anticipate net benefits to their workload as a result of generative AI.

While students grapple with multiple ways of using generative AI, around 75 per cent of academics report that they feel that their universities are still not ready for this technology.

Only a small percentage (12.5 per cent) indicated they had the resources or training needed to support the use of generative AI. And several noted that policies and documentation around ‘what is allowed and what is not’ are unclear.

One provided this advice to universities:

Get your act together - each faculty shouldn’t need to join the dots.

The data for this research project indicates that the current generation of generative AI programs has not had a transformative impact on education in universities at this early point of Semester 1 2023.

However, there are hundreds of new AI tools being made available weekly and the capabilities of current tools are constantly being refined.

Even some of the limitations identified by our participants (such as ChatGPT doing a poor job of identifying its own sources of information) are less of a consideration with emerging, more advanced technology that uses GPT-4.

With tech giants leading the way in revolutionising high-performance, reliable and intuitive AI, universities will need to evolve as well. New opportunities for innovation in learning, teaching, assessment and research will be essential as we learn to live and work with generative AI in future.

Our study will continue to collect data as we move into Semester 2 to monitor how engagement with this technology changes over time. To learn what the future could hold, we want to continue to hear from current university students and staff about how they view and use AI.

The Generative AI in Universities survey is anonymous and can be accessed here.

Banner: Getty Images